Hi guys, I need to import a large CSV file into a CUBRID database. Because the import was very slow, I decided to download the CUBRID Migration Toolkit (CMT). Then I opened the CSV file in MySQL.

The process took 30 minutes to finish. I then tried to create a CUBRID table and transfer the data from the MySQL database to the CUBRID database. When the transfer was done, the table was already indexed.

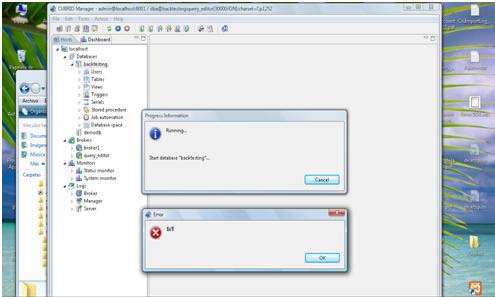

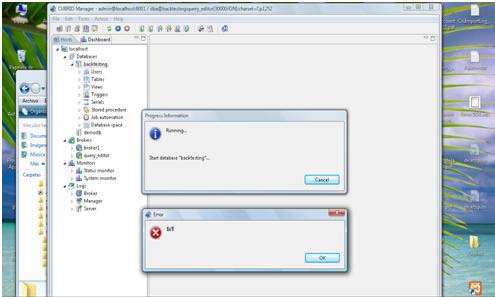

And now, when I try to run the database, I keep getting the error shown below:

Progress Information

Running.

Start database "backtesting".

Error importing large CSV file to CUBRID

Hi,

Here is a good and easy solution for importing large data into a CUBRID database.

Instead of using the default "Import Data…" function in the CM, for large data it is better to import it using "Prepared Insert" statements.

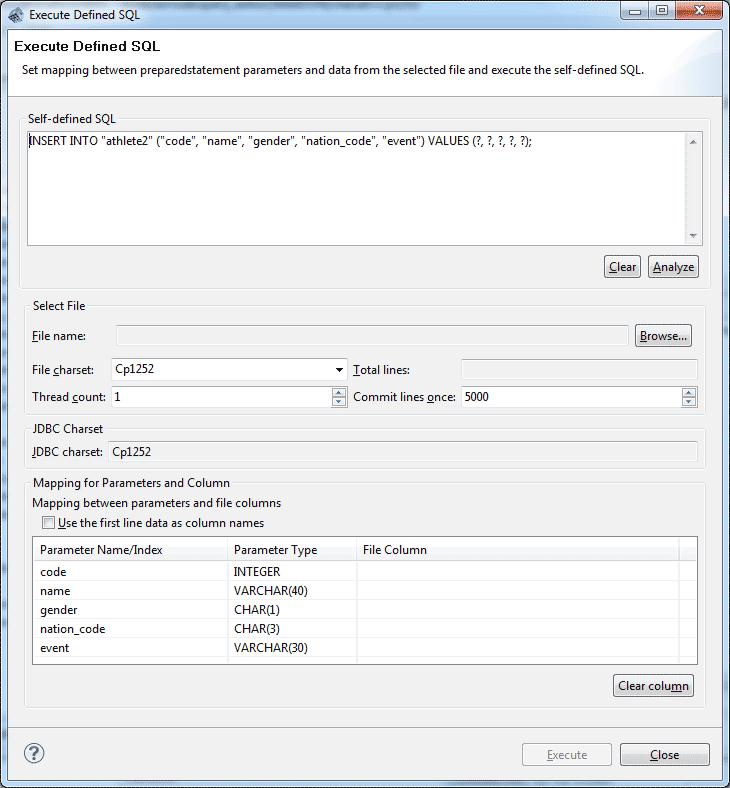

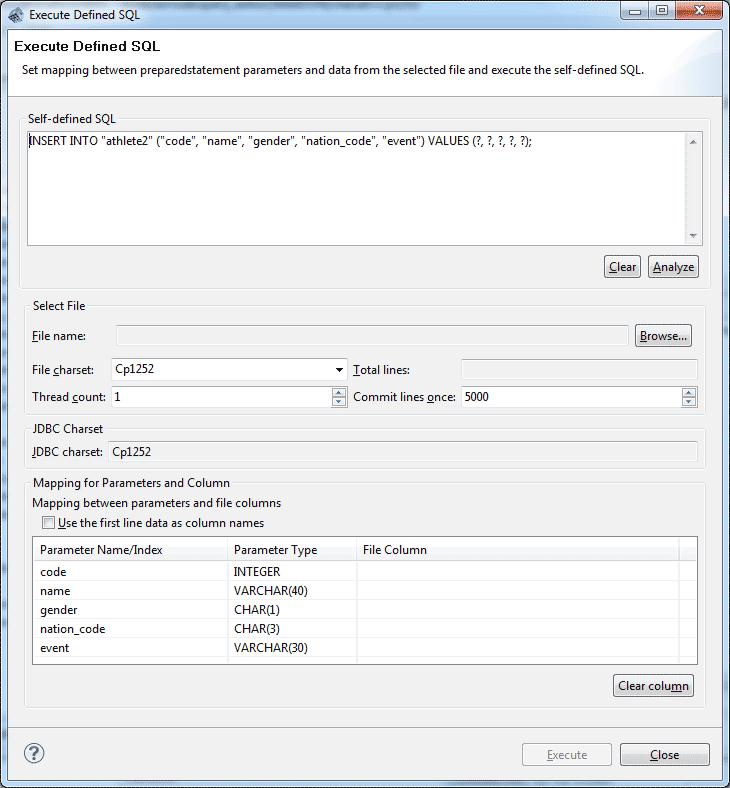

CM provides this handy solution, which allows you to insert data in multiple threads. In the CM, right-click the table and, instead of "Import Data…", choose "Execute Prepared SQL", then "Insert by read file". The following window will pop up.

Here you will see the Prepared SQL statement that was automatically created for your target table, with each parameter's name/index and type listed at the bottom of the window. So, just select the file name and indicate the number of threads to use (default 1). It is recommended to use no more than 7 or 8 threads.
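To give an idea of what a prepared insert fed from a file looks like under the hood, here is a minimal sketch in Python. It is only an illustration, not what CM actually runs: it uses the standard-library `sqlite3` module as a stand-in for CUBRID, and the table `quotes`, its columns, the sample CSV text, and the helper `load_csv` are all made up for the example.

```python
import csv
import io
import sqlite3

# Hypothetical sample data standing in for the large CSV file.
CSV_TEXT = "symbol,price\nAAA,10.5\nBBB,20.25\nCCC,30.0\n"


def load_csv(conn, csv_file, batch_size=1000):
    """Insert CSV rows through one parameterized INSERT, batch by batch."""
    reader = csv.reader(csv_file)
    next(reader)  # skip the header row
    # The statement text stays the same; only the bound values change per row.
    sql = "INSERT INTO quotes (symbol, price) VALUES (?, ?)"
    batch, total = [], 0
    for row in reader:
        batch.append((row[0], float(row[1])))
        if len(batch) >= batch_size:
            conn.executemany(sql, batch)
            total += len(batch)
            batch = []
    if batch:  # flush the last, partially filled batch
        conn.executemany(sql, batch)
        total += len(batch)
    conn.commit()
    return total


# SQLite in-memory database used as a stand-in; this is NOT a CUBRID connection.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE quotes (symbol TEXT, price REAL)")
inserted = load_csv(conn, io.StringIO(CSV_TEXT), batch_size=2)
print(inserted)
```

The point is that the statement text is fixed and only the bound values change for each row, which is what makes it practical to hand out rows from the file to several threads.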

Now, to boost import performance, it is recommended to remove the indexes before importing; they can be restored after all the data has been imported.

So, first create your table, but do not add any UNIQUE, PRIMARY KEY, or INDEX to any of the columns, because they all will trigger the indexing every time a new record is inserted during the import process.

Then do the above-mentioned "Prepared SQL" import. Once the import is done, you can add the PRIMARY KEY, UNIQUE or INDEX to the columns you want. This will trigger the indexing only once.
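The order of the steps above (table without indexes, bulk load, indexes last) can be sketched like this. Again this is just an assumption-laden illustration using Python's standard-library `sqlite3` as a stand-in for CUBRID; the table `quotes`, the index names, and the generated rows are invented for the example. SQLite cannot add a PRIMARY KEY to an existing table, so a unique index plays that role here; in CUBRID you would use its own ALTER TABLE / CREATE INDEX statements instead.

```python
import sqlite3

# SQLite in-memory database used as a stand-in; this is NOT a CUBRID connection.
conn = sqlite3.connect(":memory:")

# Step 1: create the table with no PRIMARY KEY, UNIQUE, or INDEX.
conn.execute("CREATE TABLE quotes (id INTEGER, symbol TEXT, price REAL)")

# Step 2: bulk-load the data with a prepared (parameterized) INSERT.
# The rows are generated here; in practice they would come from the CSV file.
rows = [(i, f"SYM{i % 10}", float(i)) for i in range(10_000)]
conn.executemany(
    "INSERT INTO quotes (id, symbol, price) VALUES (?, ?, ?)", rows
)
conn.commit()

# Step 3: add the indexes only after all rows are in,
# so each index is built once instead of being updated per inserted row.
conn.execute("CREATE UNIQUE INDEX idx_quotes_id ON quotes (id)")
conn.execute("CREATE INDEX idx_quotes_symbol ON quotes (symbol)")
conn.commit()

# Check that the data and both indexes are in place.
index_names = sorted(r[1] for r in conn.execute("PRAGMA index_list('quotes')"))
row_count = conn.execute("SELECT COUNT(*) FROM quotes").fetchone()[0]
print(row_count, index_names)
```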

By following these steps you can improve your data import performance.